Intro

Ever since I started in all things hax0ring, I knew my path was down the road of exploit development and all things reverse engineering. I was never a "web defacer guy" like some of the friends I started with. This, mixed with the ambition of discovering an exploitable memory corruption 0-day (hence the name of this blog), has led me down the path of certifications that are proven to give value in pentesting & exploit development.

I studied like a nerd for these like I have never studied in my life (I was a lazyass-party-goer at Uni). I'm a proud holder of these and got full marks at first try on the three OS[CPE]{2} certs. Since this path is not so uncommon these days, I felt it would be good to share my opinions and views on such certifications and training, in case anyone is also wondering whether to take them or not. Spoiler: They are all really worth it.

I was very doubtful about writing this blog post, as it has a big chunk of my own opinion (not my employer's) and a bit of bragging, but after talking with some people out there I decided to do it and, if you read between the lines, this might help you decide to do the same. It wouldn't have been possible to take on the Corelan Advanced training and OSEE in such a short span if my current employer (SensePost) hadn't allowed me to have research time during those exact dates. I'm really grateful for being at such a wonderful company and working alongside great individuals and a great team, I seriously mean it! <3

Ever since I knew these certifications existed, my goal was to take them. I faced it as my own personal quest to achieve them, for which I did have to save quite a lot of money (had to live at my parents' more than they wanted lol). I got offered financial help for a lot of things along the way by the companies I've worked for, which I am greatly thankful for, but I felt it was my own personal quest and had to be done that way, which is what I'm now sharing with you. Here we go!

OSCP

Own experience

This certification can really be amazing if you allocate the time needed for it. I decided to book 3 full months for this one and even allocate a week of holidays to have a full blast on the labs.

For this certification, real determination and passion are needed. I have seen a few people here and there just doing the minimal effort to pass it, not really seeking the knowledge and the curiosity that the Offsec certifications require from you. If you just want the paper, I think that's totally justifiable if the business requires it, but this is such a good certification, teaching core pentesting concepts, that it would be a shame to miss out on the opportunity.

It is really sad to see that they needed to proctor the exams because there are people organised to cheat on them. Cheating on such certifications is just hurting the industry in the long run and being plain selfish.

Lab

Determination is key during the lab (and the cert itself), and it's not a coincidence that their motto is "Try Harder!". Indeed, throughout the labs you will go through really bad times of suffering and pain, and in the end they will leave you feeling humble about how much you still need to learn. Just like in the daily job of a pentester, you will face times where you assume things. The first rule of thumb is not to assume things and to actually verify whether something is or isn't there.

There is an IRC channel which I highly recommend joining while doing the exam, because in there you will meet people and even make good friends in the long run. Also, this IRC serves for discussing techniques and ways of exploitation on different machines. It's up to you whether to share or to ask other students for help (I call this cheating), since a lot of people will come to you and ask how you did X machine or how you compromised Y web app. But that doesn't happen only during certs, right? ;)

Finally, no matter how much time you book, you should have a pre-defined way of approaching the lab. By this, I don't mean the tools/techniques to pentest but rather thinking about what your goals are and what you need to practise more. In my case, most of the machines and exploits were more or less known. However, when doing SSH tunnelling I found myself lacking some understanding of how and where the ports are opened, how to set it up properly if certain settings aren't on, and how to use the tools to properly route my packets through the tunnel. So I focused on exploiting the machines that required such skills and, from OSCP to this day, I keep a very solid understanding of tunnels, while consolidating key pentesting skills along the way.
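To make the "how and where the ports are open" point a bit more concrete, here is a minimal sketch (not taken from the course material) of the three OpenSSH client forwarding modes. It only builds the command lines and never runs them. The function names, hosts, ports and the `user@pivot` account are all made-up placeholders. The post doesn't say which settings were missing; on an OpenSSH server, options such as `AllowTcpForwarding` and `GatewayPorts` are typical candidates, but that is an assumption.

```python
# Sketch only: builds OpenSSH client command lines without executing them.
# All hosts, ports and the pivot account are hypothetical placeholders.

def local_forward(listen_port, target_host, target_port, pivot):
    """-L: the listening port opens on YOUR machine; traffic exits from the pivot."""
    return ["ssh", "-N", "-L", f"{listen_port}:{target_host}:{target_port}", pivot]


def remote_forward(listen_port, target_host, target_port, pivot):
    """-R: the listening port opens on the PIVOT; traffic exits from your machine."""
    return ["ssh", "-N", "-R", f"{listen_port}:{target_host}:{target_port}", pivot]


def dynamic_forward(listen_port, pivot):
    """-D: a SOCKS proxy opens on YOUR machine; connections leave from the pivot."""
    return ["ssh", "-N", "-D", str(listen_port), pivot]


if __name__ == "__main__":
    # Reach an internal web server (placeholder address) through the pivot.
    print(" ".join(local_forward(8080, "10.0.0.5", 80, "user@pivot")))
    # Expose a listener on the pivot that connects back to a local service.
    print(" ".join(remote_forward(4444, "127.0.0.1", 4444, "user@pivot")))
    # Route arbitrary tools through the pivot via a local SOCKS proxy.
    print(" ".join(dynamic_forward(1080, "user@pivot")))
```

The thing to keep straight is which end listens: with `-L` and `-D` the port is on your side, with `-R` it is on the remote side. `-N` just tells ssh not to run a remote command.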

Exam

It's hard to talk about the exam without spoiling it but, since there are already a lot of public references on how it is, let's just say it's about practising what you've learned during the labs. Here are some recommendations that worked for me; bear in mind they might not work for other people.

- Stick to the machine you are working on: I found out that jumping onto another box to pwn is just a loss of time and momentum. Whenever I felt I wasn't going down the right path, I just started from zero on the same machine and, most of the time, I found out that it was just a small typo or mistake that wasn't allowing me to continue on to further exploitation. There were other situations where I was just straight up not doing the right steps and needed more reading. But starting from zero lets you hold on to the little details you know about the machine at that moment. Those little details are more likely to be in your "short memory" and if you jump to another box, at least in my case, you are very likely to have forgotten them by the time you go back.

- Read "everything" in front of you: If you are going to use an exploit and it doesn't work straight out of the box, read the source code and find where it is failing. This is another key pentesting skill. Debugging and finding the spot where the exploit has failed is often the route to victory in a pentester's job. It will point out whether you actually need to change it or just use a different attack vector. Furthermore, if you are using some sort of tool, read its documentation or, at least, the parts covering what you are using from the tool. For example, if you use the switch "-sV" from nmap, you need to understand that, yes, it does version checks, but you should also know that it has tweakable things such as "intensity" and "debugging". The same goes for any tool you are using: you should be prepared to spend time debugging it and actually seeing what it does under the hood, to prove it is doing the work that you expect it to do.

- Breaks are overrated: I know a lot of people would say otherwise but, for me, having a break for lunch when I felt I was so close to compromising a box was just something that diverted my attention. In fact, the only breaks I took were for lunch and sleeping, and only right after compromising the box I was working on.

- Have a mental schedule: One thing I found very helpful for not stressing was to keep a mental schedule of how much of the exam I wanted to have completed during the first half. I didn't manage to stick to it, as the challenge that was supposed to be the easiest for me was the one that took me the most hours, just because of a few typos. The schedule that I had in mind was really not strict, but it helped me keep the order in which I wanted to do things and a steady pace of work.
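As a small illustration of the nmap point above, this sketch builds a version-detection command line with the knobs made explicit. `-sV`, `--version-intensity` (0 to 9, nmap's default being 7) and `-d` are real nmap options; the function name `version_scan` and the target address are made up for the example, and nothing is executed.

```python
# Sketch only: assembles an nmap command line, it does not run nmap.
# The helper name and the target address are hypothetical.

def version_scan(target, intensity=7, debug=False):
    """Build an nmap -sV command with an explicit probe intensity."""
    if not 0 <= intensity <= 9:
        raise ValueError("version intensity must be between 0 and 9")
    cmd = ["nmap", "-sV", "--version-intensity", str(intensity)]
    if debug:
        # -d raises nmap's debugging level so you can see what it is doing.
        cmd.append("-d")
    cmd.append(target)
    return cmd


if __name__ == "__main__":
    # Try every probe and show debugging output for a placeholder host.
    print(" ".join(version_scan("10.11.1.5", intensity=9, debug=True)))
```

A higher intensity tries more probes (slower, more likely to identify the service), while a lower one is quicker but may miss services on unusual ports.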

Conclusion

It's a really hard and demanding certification for an entry level. It's even more demanding when you really try to make the most out of it and work on 100% of the contents and labs. OSCP really did make me realise that simple is better and I got so much value out of it. In fact, just a few days after completing the exam, I got into projects where I used lots of the techniques learned during the labs and the exam and, to this date, I still use techniques learned during the course.

OSCE

Own experience

Despite people telling me that one month is enough, I wanted to play it safe and booked two months. I found this one quite different from OSCP in the way it's structured. Where the OSCP prepared me in a straightforward way for the exam, OSCE is a different story.

I found it the most challenging and hardest of the three. It might be because I wasn't as prepared as I was for the other two but, regardless, I felt it was a real change in difficulty from OSCP, and the one in which I had to be the most creative.

Lab

The OSCP does have some guidance as well, but OSCE is based purely on achieving certain goals during the lab (apologies for being cryptic again, I don't want to spoil it). I had some problems during the lab because, despite taking holidays to again have a good go at the labs, it turned out that the hotel I was staying at didn't allow VPN connections... so I had to do the last part of the lab "conceptually" and prepare without having access to it.

In contrast to OSCP, for me it made no sense to go on IRC for the labs and be there to receive support. If I recall correctly, they ended IRC support when I started OSCE, so I had one less reason to go on IRC and just asked for non-technical help on their support page.

One thing that might be a bit upsetting at the beginning is how outdated the labs seem when it comes to the software used. But not long into the lab contents, you find yourself learning some tricks and core concepts that guide you into thinking outside of the box. If you are into exploit development, there is a high chance you aren't going to learn new tricks in the lab, but it will surely help you practise and test your exploit dev skills. The lab is really well structured and sometimes it even feels like they are holding your hand through it, only to later let go and let you crash abruptly into the exam.

One little suggestion that I would give to myself if I could travel back in time is to do the whole lab as soon as possible. I tried to follow a schedule, planning to tackle each problem in a certain week, and because of this, when some unexpected things happened, I couldn't be as prepared for the exam as I wanted.

Exam

OSCE is hard man!

To this day, I remember OSCE as the hardest exam. I have heard many opinions about it being easier or harder, depending on who you ask but, in my case, the OSCE exam really did stress me out.

I learned tons of things during the exam. Even if these are old exploits and ways to attack applications, they are things that still apply nowadays. Most definitely, I learned how to think more creatively when it comes to pentesting and crafting shellcodes. To this date, I think I solved it in a very different way than the "expected" one, as I didn't use one of the concepts taught in the labs and rather did it my own way. Later I compared solutions with some of my mates and found that they had all done it differently from me. If you are curious, let's just say that I solved it in the most convoluted way possible and really didn't think of simpler and more elegant solutions.

I followed the same "beliefs" during this exam as in OSCP: stick to the exercise, don't assume things, start from the beginning when you can't get it to work, and have a mental schedule. There is one extra suggestion for this exam:

Do proper research: Do not hesitate to do proper research and make heavy use of your favourite search engine. In order to learn the most out of this certification, the exam is going to be your friend. It will make you work hard and really try hard during the 48 hours it lasts. So make sure to research and learn about the technologies used in the exercises you are facing.

Despite it being so hard for me, I had plenty of time to solve the whole exam. However, one of the challenges really gave me a big headache, as it was working everywhere I tested it: all the VMs and different OS versions. But during the exam, it only worked twice due to a small mistake. Phew!

Conclusion

The OSCE certification has been a must for me when it comes to proving to myself that I could keep going on the path of learning at a steady and fast pace. It will teach concepts that will surely help in your daily job as a pentester, but don't expect it to be, on the technical side of things, as useful as the OSCP. This one will teach you concepts such as determination and creativity, exactly as they claim on their website. This has been more of an eye opener to me than a technical learning experience.

OSEE

Own experience

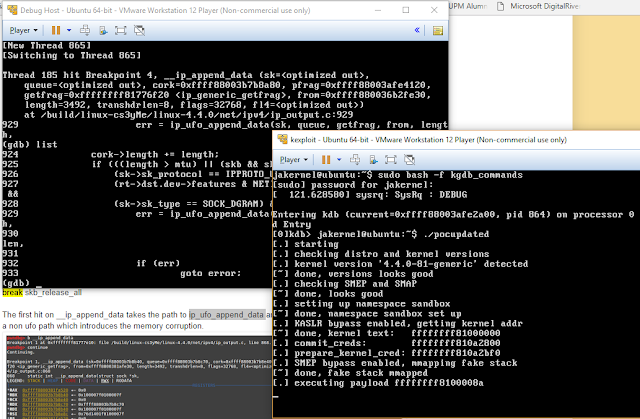

Of all the four experiences I am writing about in this post, this is the one I enjoyed the most. It gave me the opportunity to play with techniques that I only knew in theory but hadn't had the time to play with, and also to learn what the state of exploit development is nowadays.

This certification has made me realise how close I was to the actual state of exploitation and that the knowledge I had built in the last year was near enough to start "flying" on my own. I definitely was (and I still am!) missing some skills and pointers but this is why you pay for the course: to have all the things that would take you time and effort to find and learn, all in one easy to find place.

The feeling of OSEE is that it has given me the push, but I need to not be silly and lose the momentum of that push, because all the skills learned through this certification can easily get outdated.

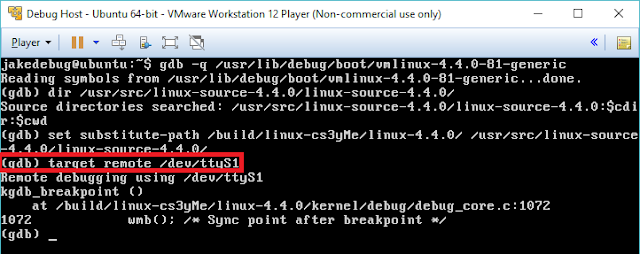

Course/Training/Lab

As they state on their website, this certification is only accessible if you first book the training. Usually the training is delivered during Black Hat USA or, if you are as lucky as me, they will deliver it in the city you are currently living in.

Funny story: When I moved to London, I had in mind taking OSEE as fast as possible but, because I was delivering training at Black Hat on the same days as the course was happening, I could not take it (it was a great experience and again I have to thank SensePost for all the investment and trust they put in their employees). But, just a few months after Black Hat, the guys at Offsec decided to do the OSEE course right in London, where I was living at the time. One of my colleagues sent me the link and I didn't hesitate for a moment. Also, the course for OSEE, namely AWE (Advanced Windows Exploitation), gets booked so quickly that it's very hard to get a spot.

For me, the training was a full week of brain melting. The first day and a half were a bit of a refresher but, from the third day, I was just like: INPUT INPUT INPUT. We were lucky to have one extra day, so I can imagine that at Black Hat it is far more stressful. The contents of the course are very up to date. The things you learn with this certification are things that you can apply to the newest exploits at the time of this writing.

The trainers were Sickness and Morten Schenk, amazing and well structured trainers. They were sometimes hard to follow but, as soon as you had a (non-absurd) question, they were more than happy to answer and give you the pointers on how to grasp the concept, never spoiling the full solution.

During the week, there are extra-mile exercises. I didn't do the first extra-mile exercise of the course because I was like: "Oh yeah, I have done this a million times, no need to practise it...". But when I saw that the next day they gave stickers to the people that worked on it overnight, I felt really stupid, and jealous :) After that lesson, I did all the extra-mile exercises on the following days, which helped me get the concepts better and also had me going to sleep at 2 am a couple of days (and also got me a sticker and an Alpha card with the Kali logo, yay!).

Finally, if you are curious, the AWE course syllabus can be accessed here.

Exam

Again, the exam is built around the philosophy of "Try harder!". This one was the easiest of the three for me, and I had the necessary points in about a day and a half. However, there is an easy and a hard way of completing one of the challenges, so I decided to also do it the hard way. This took me the most time, as I think I over-complicated things again, as I did in OSCE. I also took it as a way of practising concepts that I will need in future exploits.

The exam started at 21:00 and, despite telling myself I should first sleep and then start fresh in the morning, I couldn't help but start and give myself a few hours to play with it.

Breaks are overrated pt.2: I only took breaks when it was either time to sleep or when I could feel the mental fatigue. One easy sign of mental fatigue during the exam was when I didn't even understand the code I was writing, or when I forgot whatever I was doing right before pressing Alt+Tab. That's the point where I decided to take a break, because I definitely did not have any momentum and the brain needed a reboot: time for a walk and a drink of water.

The exam will boost your confidence around reverse engineering and exploit development techniques as, by the end of it, you'll find yourself again learning new things along the way and, as in all the previous Offsec certs, the concepts and techniques will stick in your brain timelessly.

Conclusion

It can really get addictive and, by the end of it, I felt just like Neo in the training center: "- Want some more? - Oh yes". I believe the OSEE certification is a must if you want to follow a path towards exploit development, whether it's a hobby or professionally.

At the point of completion, the only thing out there is your will to learn more and research more towards exploit development. I cannot think of other certifications or trainings that would cover more advanced topics in such a nice way as the OSEE does. Offsec has been around for years now and you can really tell when attending one of their trainings.

Corelan Advanced

As a final note on certs/trainings towards exploit development, let's talk a bit about Corelan Training by Peter Van Eeckhoutte. I decided to leave it for the end as this one doesn't have an exam.

I took the Corelan Advanced training right before taking OSEE because I thought it would be a nice step between OSCE and OSEE. It might give the impression of being outdated due to being 32-bit and the exploits not being the bleeding-edge-latest. However, it does teach core concepts from heap managers that can be applied to the latest Windows versions. The few things that change are the mitigations implemented. After the course I do feel way more confident with heap allocators overall.

The training itself is also very demanding and, if you want to get the most out of it, you are going to suffer a good chunk of lack of sleep but, on the last day, I was like: I could do this for weeks.

Peter is one of the best trainers I have seen. The way he supports his teaching with drawings is just incredible, as it really makes you conceptually see the paths for exploitation. Also, his delivery of the contents is top notch and he will answer questions as many times as people need in order to understand them. It's worth noting that in this case, because it had a lot to do with memory structures in Windows, it helped so much to have already done a good chunk of research on the Linux memory allocator.

I was also lucky to be there with three friends. It did help a lot to discuss some of the exercises right after class. That is, when there was an "after", as the class during the first two days went from 9am 'til 10pm and then there were exercises to solve at night :)

This course is a logical jump between OSCE and OSEE, and Peter is a great individual that shares his knowledge in a swift manner. I 100% recommend it in case you are also pushing towards exploit development.

Overall Conclusion

We are the generation that is seeing this whole information security thing blow up, and one important thing is to specialise in something, as the field is very broad. Unluckily for the current generation, there aren't many academic ways to go into such specialisations, but these certifications surely help. This generation is also lucky because there is a filter on the people that can get into it. Only those who are really passionate (μεράκι) about the things they do and have a never-ending curiosity finally make it. The so-called hackers ;)

As a final note, I asked during the OSEE course, what would be the next steps and the answer was that there is really no golden path towards becoming good as a pentester or exploit developer, it all depends on how much you want it to happen. So, until then, never stop trying harder!